A Selection of Links and Articles Related to Reproducible Research

Table of Contents

- Selection of Articles I particularly enjoyed

- Blogs or websites

- Articles/Conferences

- Tools

- Scientific instruments

- Publication model

- Reconciling HPC/Big Data with reproducible research

- Statistics


I have been interested in reproducibility for several years now and have collected a number of links and articles that inspired me. After Konrad Hinsen pointed out to me that several of these articles were not always publicly available, I decided to make this list public with pointers to "freely usable URLs". I'm not sure the sorting is really relevant, but people may find interesting subjects here.

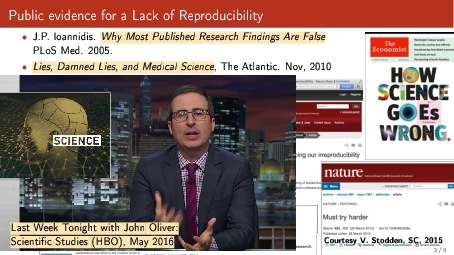

If you want a short introduction/motivation to this topic, you may want to have a look at the slides I prepared for the Inria days in June 2016, which are riddled with pointers.

I organize a series of webinars on this topic, where I invite colleagues to present their views on specific subjects. You'll find a lot of useful information there.

Selection of Articles I particularly enjoyed

- The Truth, The Whole Truth, and Nothing But the Truth: A Pragmatic Guide to Assessing Empirical Evaluations. I particularly like Figure 10 and Section 7 that summarize quite well what I feel is happening in our community regarding publication bias.

- A manifesto for reproducible science in Nature.

- The Dagstuhl Manifesto on Engineering Academic Software

- Should computer scientists do more experiments? (16 excuses to avoid experimentation). A 20-year-old paper with pretty sound remarks that are still completely relevant today!

- Top 10 Reasons to Not Share Your Code (and why you should anyway) (Randall J. LeVeque)

- Producing wrong data without doing anything obviously wrong! (Amer Diwan et al.). A good example of bias induced by pseudo-replication in computer performance measurement.

- Replicability is not reproducibility: Nor is it good science (Drummond)

- From Repeatability to Reproducibility and Corroboration (Feitelson)

- "Measuring reproducibility in computer systems research" (Collberg et al.) (website) A very nice meta-study about the state of reproducibility in our community.

- Implementing Reproducible Research edited by Victoria Stodden, Friedrich Leisch, Roger D. Peng. This book is in some ways a follow-up to the AMP workshop mentioned later on. It provides many interesting references and points of view. Unfortunately, being a collection of chapters by different authors, it has some overlap and lacks a unified view/presentation. Still, this is an interesting effort that provides a lot of pointers and related work.

- The Canon: a collection of readings on experimental evaluation and "good science".

- 10 Simple Rules for the Care and Feeding of Scientific Data. Not much content here but simple "rules" for those who need to be reminded of the value of data…

- Must science be testable? by Massimo Pigliucci in aeon.co

- How computers broke science – and what we can do to fix it. I like the content, though not so much the title.

- Towards Yet Another Peer-to-Peer Simulator and The State of Peer-to-peer Network Simulators (Naicken et al.)

- Lightning Talk: ”I solemnly pledge” A Manifesto for Personal Responsibility in the Engineering of Academic Software

Blogs or websites

General discussions

- Konrad Hinsen's blog

- Andrew Davison's tutorial, which is full of interesting references

- http://reproducibleresearch.net/index.php/Main_Page (I have not visited this one in a long time)

- http://wiki.stodden.net/ICERM_Reproducibility_in_Computational_and_Experimental_Mathematics:_Readings_and_References

- http://www.ploscompbiol.org/article/info:doi/10.1371/journal.pcbi.1003285

- http://collective-mind.blogspot.fr/2015/02/new-years-digest-on-collaborative.html

- https://github.com/Daniel-Mietchen/datascience/blob/master/reproducibility.md

"Political" thoughts

General discussions about scientific practice

Articles/Conferences

Webinars

The ones I organize with colleagues: https://github.com/alegrand/RR_webinars/blob/master/README.org

Summer school

I co-organized a Summer school on Performance Metrics, Modeling and Simulation of Large HPC Systems, in which I gave a tutorial on best practices for reproducible research. This tutorial was recorded and made available:

Coursera

The series of lectures on Data Science from Johns Hopkins University that can be found on Coursera is not bad. In particular, Roger D. Peng's lecture on reproducible research had several interesting references. Unfortunately, this series of lectures now seems to require payment. :(

Conferences and events (roughly sorted by date)

- Workshop on Duplicating, Deconstructing and Debunking (WDDD) (2014 edition). A very early workshop around this subject.

- http://evaluate2010.inf.usi.ch

- Reproducible Research: Tools and Strategies for Scientific Computing (the AMP workshop by V. Stodden… this website provided me with a great and up-to-date list of references and tools)

- Working towards Sustainable Software for Science: Practice and Experiences (a SC workshop that started in 2013)

- REPPAR'14: 1st International Workshop on Reproducibility in Parallel Computing (Sascha Hunold, Lucas Nussbaum, Mark Stillwell and I organized this workshop, which targets the parallel computing community)

- Artifact evaluation in the ESEC/FSE conference.

- TRUST 2014 (organized by Grigori Fursin)

- Reproducibility@XSEDE: An XSEDE14 Workshop

- Reproduce/HPCA 2014

- http://vee2014.cs.technion.ac.il/docs/VEE14-present602.pdf

- 1st workshop on Reproducibility of Computation Based Research: Languages, Standards, Methodologies and Platforms (Joint event with NTMS15).

- REPPAR'15: 2nd International Workshop on Reproducibility in Parallel Computing (Sascha Hunold, Lucas Nussbaum, Mark Stillwell and I organized this workshop, which targets the parallel computing community)

- 1st Workshop on E-science ReseaRch leading tO negative Results (ERROR)

- …

- R^4 workshop at Orléans: see my notes here

- Mini symposium "Recherche reproductible" - CANUM 2016

- Christophe Pouzat, MAP5, Université Paris-Descartes: Une brève histoire de la recherche reproductible et de ses outils (A brief history of reproducible research and its tools)

- Jean-Michel Muller, LIP (Laboratoire de l’Informatique du Parallélisme): Arithmétique virgule flottante : la lente convergence vers la rigueur (Floating-point arithmetic: the slow convergence towards rigor)

- Fabienne JÉZÉQUEL, LIP6 (Laboratoire d’Informatique de Paris 6): Estimation de la reproductibilité numérique dans les environnements hybrides CPU-GPU (Estimating numerical reproducibility in hybrid CPU-GPU environments)

- Enric Meinhardt-Llopis, ENS Cachan: IPOL : Recherche reproductible en traitement d’images (IPOL: reproducible research in image processing). A great talk!

- Talk on “Open access publishing: A researcher’s perspective” by Karl Broman. I really like the first part of the story where he desperately tries to access some paper.

- http://ccl.cse.nd.edu/workshop/2016/ from the Notre Dame group of Douglas Thain

- http://www.cs.fsu.edu/~nre/ in conjunction with SC16

A special issue of ACM SIGOPS on Repeatability and Sharing of Experimental Artifacts

- A Roadmap and Plan of Action for Community-Supported Empirical Evaluation in Computer Architecture (Childers, Jones, Mossé)

- Raft Refloated: Do We Have Consensus? (Howard, Schwarzkopf, Madhavapeddy, Crowcroft)

- Automated Assessment of Secure Search Systems (Varia, Price, …)

- From Repeatability to Reproducibility and Corroboration (Feitelson)

- Locating Arrays: A New Experimental Design for Screening Complex Engineered Systems (Aldaco, Colbourn, Syrotiuk)

- Conducting Repeatable Experiments and Fair Comparisons using 802.11n MIMO Networks (Abedi, Heard, Brecht)

- The dataref versuchung (Dietrich, Lohmann)

- An Effective Git And Org-Mode Based Workflow For Reproducible Research (Stanisic, Legrand, Danjean)

- An introduction to Docker for reproducible research (Boettiger)

- Reconstructable Software Appliances with Kameleon (Ruiz, Harrache, Mercier, Richard)

- Creating Repeatable Computer Science and Networking Experiments on Shared, Public Testbeds (Edwards, Liu, Riga)

Tools

Tools I use on a daily basis

Tools I've looked at and which I don't use but have a good potential

There are many Python tools:

- The IPython notebook, or rather its newer version, Jupyter

- http://pandas.pydata.org/, which provides data frames and the basics of R-style linear regressions

- http://scikit-learn.org/stable/ that provides many machine learning tools

The truth is that, at the moment, I prefer to stick with R because I'm more familiar with it and because it has the lead on many things, in particular in terms of expressiveness. Take for example graphics:

- On the R side, there is ggplot2, which is extremely expressive

- On the Python side, there is matplotlib, which is by comparison very low level. It can provide very nice graphics but it's way less expressive.

- And I'm not the only one to think this. See for example http://blog.yhathq.com/posts/ggplot-for-python.html. That's why there have been several "ports" or reimplementations. In any case, the Python community is likely to "win" in the end with excellent tools and a "one language to rule them all" approach but for the moment, I'll stick to my combination of specialized languages/tools that I master quite well. :)

Tools I stumbled upon and that have interesting features

Sectioning is difficult, as projects span different aspects.

Logging and

- ActivePapers (Tutorial). Not sure this is the right section though…

- Sumatra (A great idea by Andrew Davison, which definitely inspired some of our methodology based on git and org-mode)

- Burrito is a tool that monitors and logs your activity so that you can possibly come back to previous situations.

Packaging

- CDE (slides from the AMP workshop) and CARE are tools that help you package code so that it can be rerun by others.

- There is also a recent effort (by the vistrails team) called ReproZip, which traces dependencies and files and relies on VMs and Vagrant to reproduce the environment.

Experiment management:

- Vistrails (A workflow engine, which Sascha Hunold tried as an alternative to my ugly combo of scripts/Makefiles. Definitely interesting.)

- 3X: A Workbench for eXecuting eXploratory eXperiments (never used it though). It looks like a mixture of rip APST with a code packaging tool and some visualization infrastructure. Somehow, it's related to Sumatra (with much more parallelism and way less journaling). It's crazy to see how we all reinvent the wheel. :(

Analysis platforms

- Undertracks (ad hoc platform initially designed at the LIG for studies on technology-enhanced learning systems).

- Framesoc and a trace archive (I'm somewhat involved in these projects).

Shared Storage Infrastructures

Proprietary tools :(

- The Elsevier approach

- ResearchGate (not really about reproducibility but more about open reviews, which is also an important/interesting topic)

- IBM ManyEyes project

- RunMyCode. This project was initially meant to allow re-running the code of articles but is now merely a data/src hosting platform. Konrad Hinsen explained to me that there had been a fork and that the execution part of this project had moved to the execandshare project.

- http://f1000research.com/. Something I particularly like is the open review principle. Look for example on the right of http://f1000research.com/articles/3-289/v3/. On this topic, one may be interested in https://opennessinitiative.org/.

Other interesting publishing sites

Workflow tools

Scientific instruments

Publication model

- Stop the deluge of science research :)

- Shared reviews against predatory journals:

- Worried about copyright issues and journals annoying you when making all your work freely reproducible? I enjoyed Lorena Barba's experience report about this (last question).

- Distill: a modern machine learning journal?

- Rogue approaches to scholarly communications

- Allowing new kinds of papers that combine the flexibility of "basic research" with the rigour of clinical trials.

Reconciling HPC/Big Data with reproducible research

Why should I believe your supercomputing research?

Confidence in an instrument increases if we can use it to get results that are expected. Or we gain confidence in an experimental result if it can be replicated with a different instrument/apparatus. The question of whether we have evidence that claims to scientific knowledge stemming from simulation are justified is not so clear as verification and validation.